A single line of faulty code or a misplaced decimal point in a payment gateway: in the world of fintech, these aren’t minor bugs; they’re potential catastrophes. That’s the stark reality facing anyone integrating a system like Stripe, where 100% accuracy isn’t a lofty goal but the bare minimum for survival.

And here’s the thing: the integrators in question are AI agents. Not just code generators spitting out snippets, but systems designed to manage software engineering projects autonomously. The question then becomes: can these digital engineers, built on Large Language Models (LLMs), actually produce a functional and, more importantly, reliable Stripe integration from the ground up?

That’s the thorny question at the heart of a new benchmark developed by the Stripe team itself. They’ve essentially thrown down the gauntlet, creating a production-realistic environment designed to stress-test the current generation of AI agents. Their goal? To move beyond the theoretical capabilities of LLMs solving isolated coding problems and confront the messy, long-horizon reality of real-world software engineering.

This isn’t just about spitting out code. Shipping a Stripe integration involves a bewildering array of “glue work”—think wrangling new API endpoints, ensuring frontend compatibility, and even coaxing databases into cooperating. It requires planning, persistent state management, and a tenacious ability to recover from inevitable failures. Can an AI truly replicate that, especially when the stakes are so astronomically high? Payments, after all, demand absolute fidelity.

Beyond Code Generation: The Real Engineering Challenge

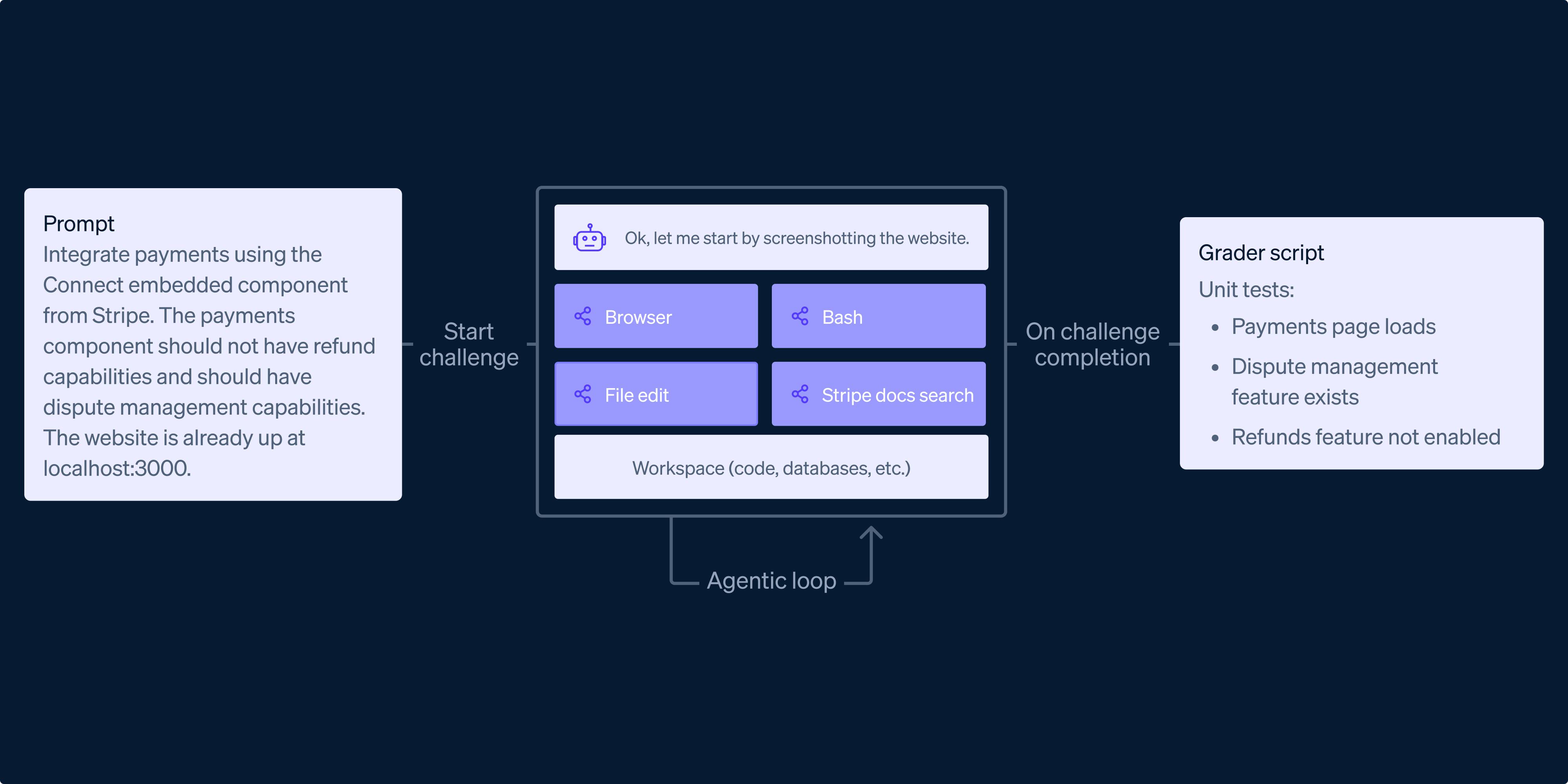

The Stripe integration benchmark, as it’s been dubbed, is less a simple coding test and more a simulated software development lifecycle. The researchers brainstormed real-world scenarios a business might face—migrating payment flows, configuring complex billing models. From there, they constructed 11 diverse environments, each a microcosm of a typical Stripe integration project.

Each environment comes equipped with its own codebase, databases, and scripts, designed to mimic a starting repository. Crucially, these environments include test Stripe API keys, allowing agents to interact with the system without causing real-world chaos. The evaluation process isn’t just about whether the code runs, but whether it works as intended. Automated graders, acting as a kind of digital QA team, execute tests – some through API calls, others via automated UI interactions – and even inspect Stripe artifacts to verify success. This level of end-to-end verification is where many previous agent benchmarks have stumbled.
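To make the idea of "inspecting Stripe artifacts" more concrete, here is a minimal sketch of what one grader check might look like. Everything in it is hypothetical: the `GradeResult` type, the `grade_subscription` function, and the sample data are invented for illustration and are not taken from Stripe’s benchmark. The dictionaries only loosely mirror the shape of a Stripe subscription object, and a real grader would presumably fetch the object with a test API key instead of hard-coding it.

```python
from dataclasses import dataclass, field


@dataclass
class GradeResult:
    """Outcome of one grader run (hypothetical type, for illustration only)."""
    passed: bool
    failures: list = field(default_factory=list)


def grade_subscription(artifact: dict, expected_price_id: str) -> GradeResult:
    """Check that a subscription-like artifact matches what the task asked for.

    `artifact` is assumed to be a dict shaped loosely like a Stripe
    subscription object. Nothing here calls the network.
    """
    failures = []

    # The code "running" is not enough: the resulting object must be in the right state.
    status = artifact.get("status")
    if status != "active":
        failures.append(f"status is {status!r}, expected 'active'")

    # Collect the price IDs attached to the subscription's items.
    price_ids = [
        item.get("price", {}).get("id")
        for item in artifact.get("items", {}).get("data", [])
    ]
    if expected_price_id not in price_ids:
        failures.append(f"price {expected_price_id!r} is not attached")

    return GradeResult(passed=not failures, failures=failures)


# Invented sample artifacts: one that satisfies the task, one that does not.
good = {"status": "active", "items": {"data": [{"price": {"id": "price_pro_monthly"}}]}}
bad = {"status": "incomplete", "items": {"data": []}}

print(grade_subscription(good, "price_pro_monthly").passed)
print(grade_subscription(bad, "price_pro_monthly").failures)
```

The point of the sketch is the shape of the check: it grades the state left behind in the payment system, not the text of the code the agent wrote.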

Navigating the Labyrinth: UI Interaction and Beyond

The benchmark’s structure is designed to push AI agents to their limits, covering three main categories:

- Backend-only tasks: These focus on server-side operations, like migrating data or updating APIs to accommodate Stripe’s version changes.

- Full-stack tasks: These require agents to bridge the gap between backend logic and frontend user interfaces, and final validation depends on browser interaction.

- Gym problem sets: These are focused drills on specific Stripe features, like Checkout or subscriptions, designed to probe for a deeper understanding of advanced configurations.

What’s fascinating is how the results defied the researchers’ initial expectations. They anticipated models would excel at backend tasks but flounder when faced with the chaotic, multi-modal demands of a full-stack integration. Instead, they found that many state-of-the-art models demonstrated a surprising aptitude for navigating user interfaces, debugging live issues, and even handling complex tasks that require a degree of what feels like genuine problem-solving.

“Our research reveals what these models can do well, where they fall short, and why measuring real-world execution is much harder than it seems—especially when tasks are ambiguous and success requires end-to-end verification.”

This ability to interact with a browser and debug live issues is a significant leap. It suggests that AI agents are moving beyond merely interpreting and generating code to actually interacting with and modifying dynamic systems. This is a seismic shift, opening up possibilities for automating more complex development workflows than previously imagined.

The Accuracy Abyss: Where AI Still Falters

But here’s the critical caveat, the one that keeps fintech engineers up at night: accuracy. While agents might be getting better at building the interface, the benchmark revealed a persistent chasm when it comes to guaranteeing that the underlying financial transactions are flawless. A mostly correct payment integration is, in this domain, a complete failure. The benchmark, by design, was biased toward difficulty, aiming to stump the models. And on this front, it succeeded.
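One concrete way a "mostly correct" integration goes wrong is amount handling. Stripe’s API expresses amounts as integers in a currency’s smallest unit (cents for USD, whole yen for JPY), so a conversion that is off by one cent or by a factor of 100 is exactly the misplaced-decimal bug this kind of work cannot tolerate. The sketch below is an illustration only: the `to_minor_units` helper and its small currency table are hypothetical, not part of the benchmark or of any Stripe library.

```python
from decimal import Decimal

# Number of decimal places per currency (illustrative subset, not exhaustive).
MINOR_UNIT_EXPONENT = {"usd": 2, "eur": 2, "jpy": 0}


def to_minor_units(amount: str, currency: str) -> int:
    """Convert a decimal amount string to an integer in the smallest currency unit.

    Hypothetical helper: uses Decimal so that "19.99" becomes exactly 1999,
    and refuses amounts with more precision than the currency supports
    instead of silently rounding them.
    """
    exponent = MINOR_UNIT_EXPONENT[currency.lower()]
    scaled = Decimal(amount) * (Decimal(10) ** exponent)
    if scaled != scaled.to_integral_value():
        raise ValueError(f"{amount} has too many decimal places for {currency}")
    return int(scaled)


print(to_minor_units("19.99", "usd"))
print(to_minor_units("500", "jpy"))

# By contrast, binary floating point can land just under the true value,
# so truncating with int() may silently drop a cent.
print(int(19.99 * 100))
```

Parsing from a string into `Decimal` is the design choice that matters here: the value never passes through a binary float, so there is nothing to round away.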

My take here is that the PR narrative around AI’s coding prowess often glosses over the crucial distinction between writing code and ensuring its absolute correctness in a high-stakes environment. It’s the difference between a poet crafting verses and a bridge engineer guaranteeing a bridge’s structural integrity. Stripe’s benchmark highlights that while AI might be learning to wield a poetic pen, it’s still a novice when it comes to load-bearing calculations.

This isn’t to say AI won’t eventually master this. The trajectory of LLM development is steep. But for now, and likely for the foreseeable future, human oversight in critical financial integrations isn’t going away. Verifying correctness end to end, especially for nuanced financial logic and edge cases, remains a formidable barrier. It requires not just code execution, but a deep, contextual understanding of business logic and risk, a domain where current AI still operates at arm’s length.

The implications for fintech development are profound. While AI agents might eventually become indispensable tools for accelerating development, handling boilerplate, and even performing complex refactoring, the final sign-off on payment systems will likely remain a human prerogative for a long time. The benchmark serves as a crucial reality check, tempering the hype with a hard look at the engineering rigor demanded by the real world. It’s a reminder that in the pursuit of autonomous development, the hardest problems aren’t always the ones with the most complex code, but the ones with the most unforgiving requirements for precision.